Developer Workflow Research

I sat on a design and research team that supported all 40+ products in Amazon's developer tools org — which meant I could see things no single product team could. I co-led the first cross-tool qualitative study of how 80,000+ engineers actually ship code, following 18 builders through their full development cycle. The findings reshaped how leadership prioritized investment, sent 15 product teams new work, and became the foundation for an org realignment around workstreams.

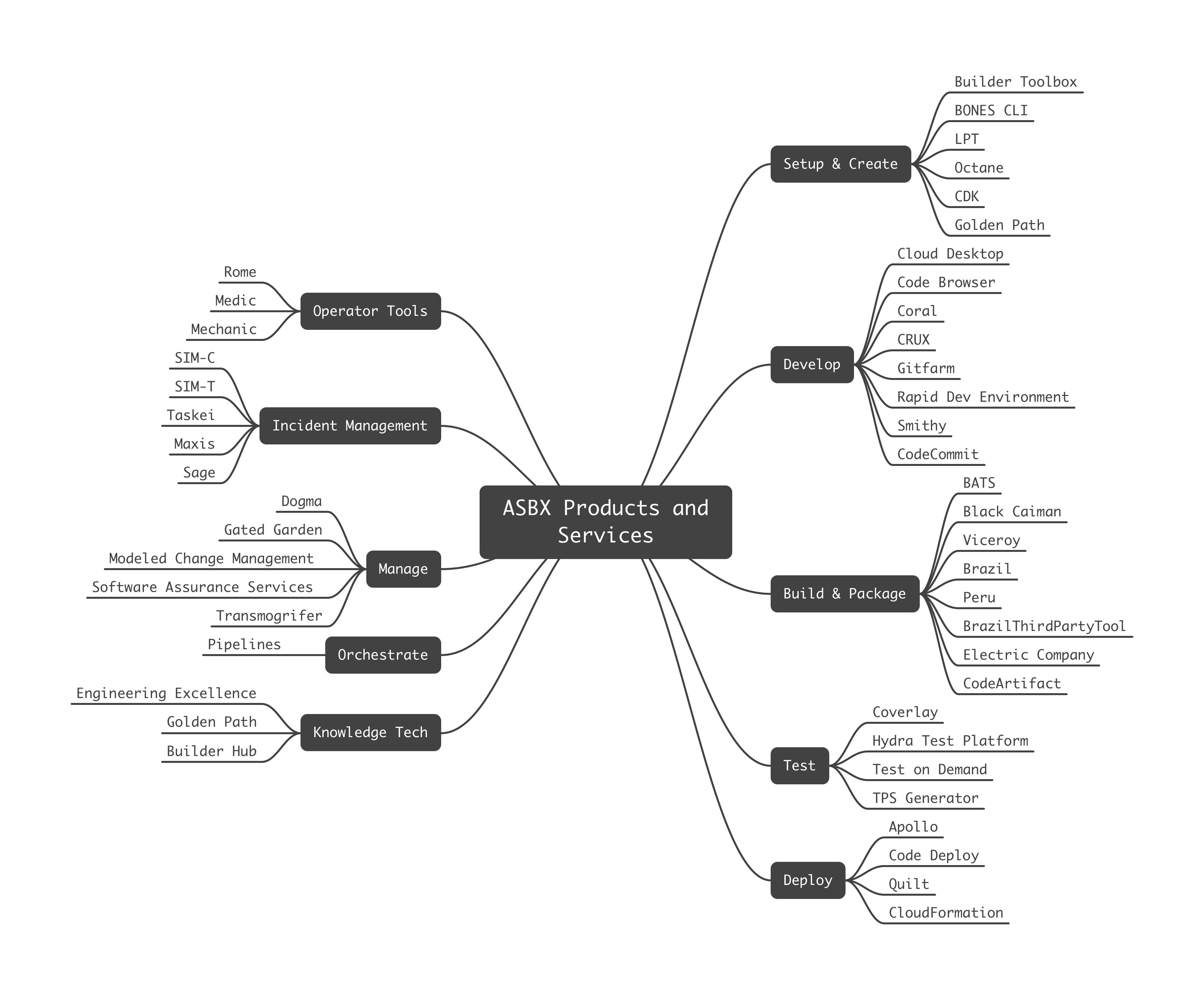

The system no one had mapped

Amazon consolidated 40+ developer tools under one organization in 2022, but teams were still organized by technical ownership, not by how builders actually work. Existing surveys measured satisfaction tool by tool. The systemic problems living between products were invisible. This was the gap the research had to close.

A shared mental model for leadership

The org operated with a linear mental model of the development lifecycle. Research showed the reality was cyclical, with an inner loop for individual development wrapped in an outer loop for release, operate, and evaluate. We co-designed a flywheel that mapped insights to specific phases and explicitly marked the areas the study didn't cover. It replaced tool-by-tool conversations with one view of how builders actually work.

A reference PMs could actually use

The flywheel gave leadership a way to think about the system. PMs needed something more specific: where does my product sit in a builder's day, and what surrounds it? We built a detailed workflow map from 18 interviews — the inner developer loop and build/deploy phases up top, with appendices zooming into code review and error diagnosis. Every step was annotated with the pain points we'd heard. The density is the message: this is what builders navigate every day.

Zooming in: code review

Each section of the workflow told its own story. Code review was one of the most complex — two parallel paths (submitting vs. reviewing), decision points around analyzer rules and approval, context switches while waiting for feedback, and error diagnosis branches that could send builders back through the entire loop. The callout annotations capture what we heard in interviews: workarounds, tool-specific friction, and moments where the process broke down.

“The review itself takes 20 minutes. Getting someone to do it takes three days.”

— SDE, from research interviews

The friction surfaced here became the starting point for follow-on work I later led with the Code Review team to address several of these specific issues.

What the research made possible

The most significant outcome wasn't a single finding — it was the follow-on work the research enabled. One of the first deep dives was a cross-org notifications workshop. Every tool sent alerts independently with no shared priority, so a deployment safety override and a marketing digest arrived through the same channel with the same weight. No single team owned this problem, and no single team could have surfaced it. The research gave the org both the evidence and the shared vocabulary to bring the right stakeholders into one room.

INFRA-3391 moved to In Progress

Build #4891 succeeded -- 847 tests passing

Pipeline approval required -- test run result: pass

Canary at 2% traffic -- no errors

#team-backend: Standup notes posted

Service outage / issues -- easily overlooked

#oncall: Handoff notes for next rotation

#team-backend: Anyone know who owns the auth config?

Test run failed -- approval workflow blocked

Weekly Builder Tools digest: 14 updates

Task status update: SIM-4201 closed

Pipeline config updated -- rebuild required

PR #1250: refactor auth middleware

Complete compliance training by Friday

Service outage detected -- IsItDown banner active

PR #1247 approved -- ready to merge

Changes requested on PR #1244

3 packages with security patches available

New task opened: BACKEND-882

Production deploy queued -- 3 ahead

Notifications arrive from every tool independently. No shared priority, no coordination, no way to distinguish signal from noise.

The research gave a 40+ product org its first shared, evidenced view of the builder experience.

- Leadership stopped having tool-by-tool conversations. The flywheel became the shared frame for prioritizing cross-cutting initiatives over product-specific fixes.

- PMs could locate their product in a builder's actual day and tell which pain points were theirs to fix versus which lived in the gaps between products. Scoping conversations got concrete.

- 30+ documented patterns across three themes (knowledge transfer, dependency visibility, tool pains) gave the org evidenced problems to fund work against.

- Teams across the org used the findings to prioritize and add work to their roadmaps. The design team supported follow-on work across dozens of products, and I personally partnered with 15 product teams, including Code Review, IDE, Pipelines, and Change Management, on design work that addressed friction the study had surfaced.

- Triggered follow-on deep dives the org couldn't have scoped before: a cross-tool notifications workshop, and targeted UXR studies on IDE integration, code review, and developer onboarding.

- Became the foundation for an org realignment around workstreams: the UXR team's later jobs-to-be-done model, organized around Plan, Build, Deploy, Monitor/Operate, and Knowledge Discovery, traces directly back to this study.